AI Carbon Footprint Calculation: Measuring and Managing AI’s Environmental Impact

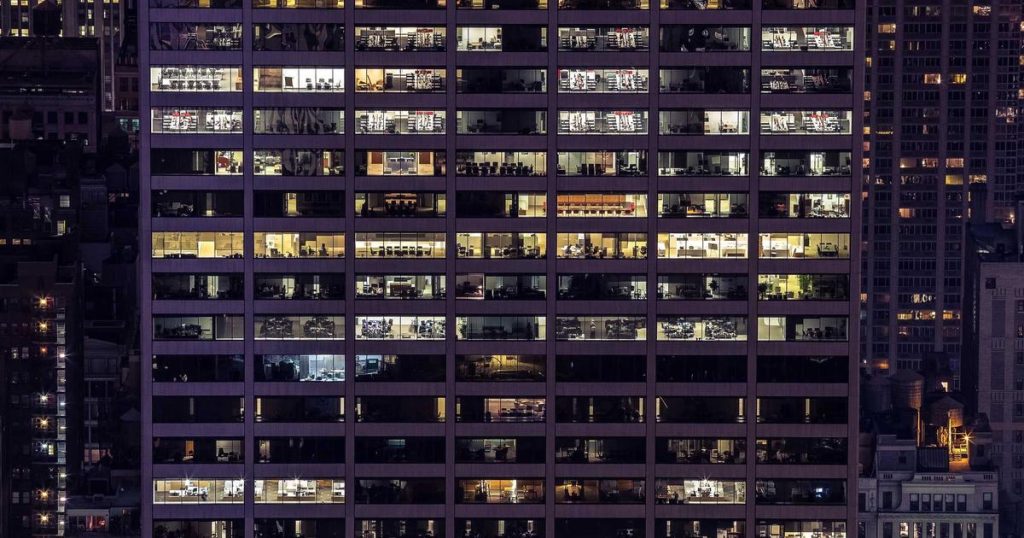

Introduction

As artificial intelligence permeates every sector of the economy and society, questions about its environmental impact have moved from academic curiosity to urgent practical concern. Training large language models, running inference at scale, and maintaining the data center infrastructure that powers AI systems all consume substantial energy and generate greenhouse gas emissions. Yet for